This study material is compiled from a lecture audio transcript and copy-pasted text provided by the user.

Understanding Syntax Analysis and Parsing in Language Implementation

📚 Introduction to Language Processing

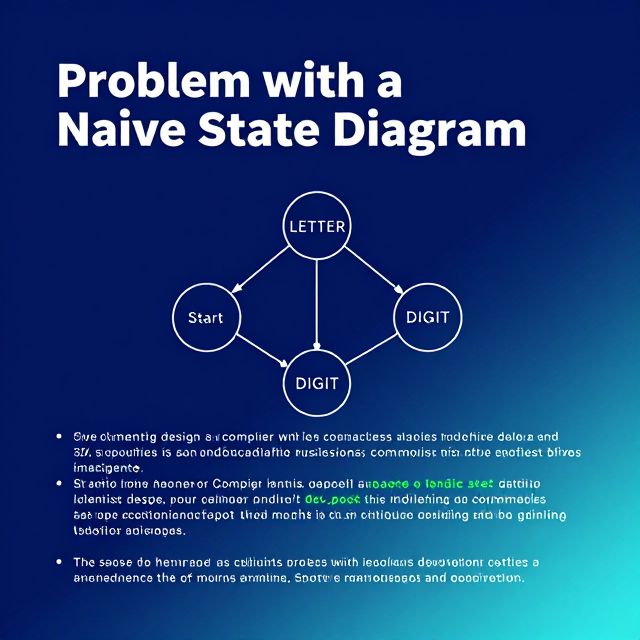

The implementation of programming languages relies heavily on a series of processing stages, with syntax analysis being a fundamental and indispensable component. This stage ensures the structural correctness of code, making it comprehensible to machines. For anyone delving into computer science or software development, grasping these concepts is crucial for understanding how programming languages are processed from source code into executable forms.

1️⃣ The Precursor: Lexical Analysis

Before syntax analysis can begin, the raw input program undergoes lexical analysis. A lexical analyzer acts as a sophisticated pattern matcher. Its primary role is to:

- Scan the input program (a stream of characters).

- Isolate its small-scale parts, known as tokens.

- Tokens are the fundamental building blocks of a program, such as keywords (e.g.,

if,while), identifiers (variable names), operators (e.g.,+,-), and literals (e.g.,123,"hello"). - By recognizing predefined patterns, the lexical analyzer transforms a raw stream of characters into a meaningful sequence of tokens, which then serves as input for the parser.

2️⃣ Syntax Analysis (Parsing): Ensuring Structural Correctness

Syntax analysis, often referred to simply as parsing, is the process of analyzing a sequence of tokens to determine its grammatical structure according to a given formal grammar. It is a common and critical part of any language implementation.

✅ Role and Output

- Ensures Structural Correctness: The parser verifies that the sequence of tokens forms a valid program structure based on the language's grammatical rules.

- Produces a Parse Tree: A successful syntax analysis generates a parse tree (also known as a derivation tree). This hierarchical data structure visually represents the grammatical structure of the input program, showing how tokens group together to form phrases and statements.

- Foundation for Later Stages: The parse tree is then utilized by subsequent compiler phases, such as semantic analysis (checking meaning) and code generation (translating to machine code), to further understand and transform the program.

⚠️ Detecting Syntax Errors

A crucial function of syntax analysis is its ability to detect syntax errors. These are violations of the grammatical rules of the programming language. When the parser encounters a sequence of tokens that does not conform to the language's defined grammar, it flags a syntax error, preventing the compilation or interpretation of ill-formed code.

3️⃣ Parsing Methodologies: Top-Down vs. Bottom-Up

There are two primary approaches to parsing: top-down and bottom-up.

📈 Top-Down Parsing: LL Parsers

Top-down parsers attempt to construct a parse tree by starting from the root (the grammar's start symbol) and working downwards to the input tokens.

- Recursive-Descent Parser: A common example of a top-down parser.

- LL Parser: Recursive-descent parsers are classified as LL parsers.

- The first 'L' stands for scanning the input from Left to right.

- The second 'L' stands for producing a Leftmost derivation.

- Approach: This type of parser typically uses a set of recursive procedures, one for each non-terminal in the grammar, to attempt to match the input to the grammar rules from the top down.

📊 Bottom-Up Parsing: LR Parsers

Bottom-up parsers construct a parse tree by starting from the input tokens (leaves) and working upwards towards the root (the grammar's start symbol).

- LR Parser: A key characteristic of an LR parser, which belongs to the bottom-up family, is that it traces a rightmost derivation in reverse.

- A rightmost derivation expands the rightmost non-terminal in each step until a complete string of terminals is formed.

- An LR parser works backward from the input string, identifying substrings that correspond to the right-hand side of a grammar rule, and then reducing them to the non-terminal on the left-hand side.

💡 The Core Problem: Finding the Handle

The central parsing problem for bottom-up parsers is finding the correct substring of the current sentential form that corresponds to a handle.

- A handle is a substring that matches the right-hand side of a production rule and whose reduction represents one step in the reverse of a rightmost derivation.

- Identifying this handle at each step is critical for correctly building the parse tree from the leaves up to the root.

⚙️ Shift-Reduce Parsers: The LR Family

Among the various bottom-up parsing techniques, the LR family of shift-reduce parsers stands out as the most common and powerful approach. These parsers operate by performing two fundamental actions:

- Shift: Moves input symbols onto a stack. This action effectively "reads" the next input token and pushes it onto a parsing stack.

- Reduce: Replaces a recognized handle on the stack with its corresponding non-terminal. When the top of the stack contains a sequence of symbols that matches the right-hand side of a grammar production, those symbols are "reduced" (popped) and replaced by the non-terminal on the left-hand side of that production.

Example of Shift-Reduce Actions:

Consider a simple grammar rule: E -> id (Expression can be an identifier).

If the input is id and the stack is empty:

- Shift: The parser shifts

idfrom the input onto the stack. Stack:[id] - Reduce: The parser recognizes

idon the stack as the right-hand side ofE -> id. It reducesidtoE. Stack:[E]

This robust and efficient method makes LR parsers widely used in modern compiler construction due to their ability to handle a large class of grammars and their deterministic nature.

✨ Key Takeaways

- Lexical Analysis tokenizes input, while Syntax Analysis (parsing) checks grammatical structure.

- Syntax Analysis detects syntax errors and produces a parse tree.

- Top-Down Parsers (e.g., LL parsers like recursive-descent) build the tree from the root down, using a leftmost derivation.

- Bottom-Up Parsers (e.g., LR parsers like shift-reduce) build the tree from the leaves up, tracing a rightmost derivation in reverse.

- The core challenge for bottom-up parsers is identifying the handle for reduction.

- Shift-Reduce Parsers are the most common LR approach, using 'shift' to move input to a stack and 'reduce' to apply grammar rules.